7 Subtle Voice Cloning Scams to Watch

TL;DR

- Voice cloning uses AI to mimic real voices, and scammers are already using it to impersonate loved ones and financial institutions.

- A few proactive digital hygiene habits can dramatically reduce your exposure without making your life harder.

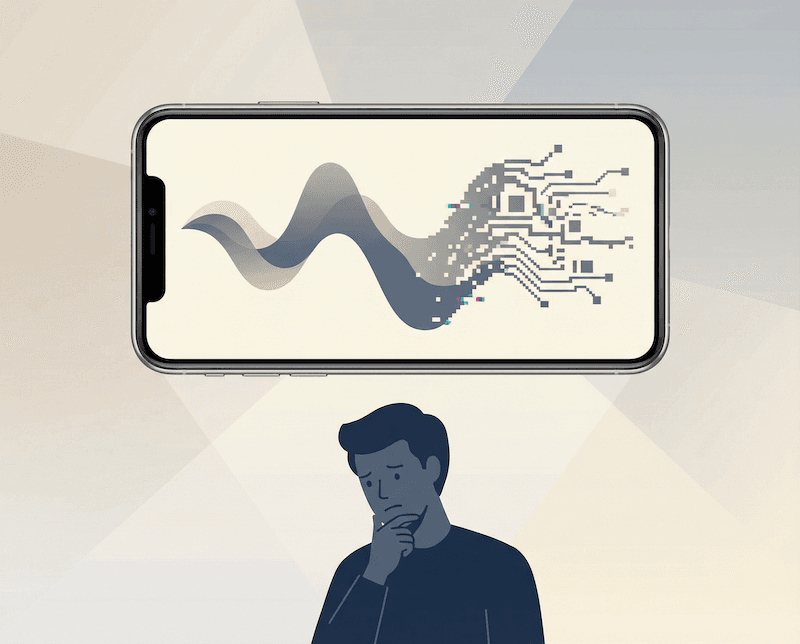

Imagine getting a call from your sister. Her voice sounds shaky. She says she needs money right now.

It feels real because it sounds real. But it is not her.

This is voice cloning. And it is no longer futuristic.

This technology uses artificial intelligence to replicate a person’s speech patterns, tone, and inflection. With just a short audio sample, AI systems can generate speech that sounds convincingly human.

While there are legitimate uses for this innovation, fraud is rising alongside it. Financial institutions such as BECU have warned that scammers are leveraging AI-generated audio to impersonate family members and financial representatives.

What Is Voice Cloning, Really?

At its core, voice cloning is a machine learning process. AI analyzes recorded speech, identifies vocal patterns, and then synthesizes new audio that mirrors the original speaker.

Modern AI voice cloning models need far less data than earlier versions. In some cases, just a few seconds of public audio from social media can be enough.

That shift matters because most of us have already posted our voices online.

Why These AI Voice Scams Are Surging

We live online. We share voice notes, TikToks, podcasts, and Instagram stories. Meanwhile, generative AI tools are becoming cheaper and more accessible.

According to McAfee’s reporting on AI voice scams, a growing number of adults say they have experienced or know someone who has experienced a voice impersonation attempt.

Culturally, we trust voices. We recognize them faster than faces. That instinct makes AI voice impersonation particularly powerful.

As a result, fraudsters exploit urgency. They create emotional pressure. They rely on your reflex to help first and verify later.

7 Hidden Voice Cloning Risks You Should Know

1. Emergency Impersonation

Scammers use synthetic voice technology to pose as a child, partner, or friend claiming they are in trouble. The request is usually financial and urgent.

2. Financial Institution Spoofing

A cloned voice can impersonate a bank representative. Because it sounds official, people share verification codes or account details.

3. Business Wire Fraud

In workplace settings, executives have reportedly been impersonated using AI voice cloning. Employees receive instructions to transfer funds quickly.

4. Social Engineering Layering

AI-generated voice scams are often combined with phishing emails or text messages. The follow-up call reinforces the lie and lowers skepticism.

5. Erosion of Voice Biometrics

Some services use voice recognition as authentication, on the assumption that a voice is unique to its owner. Advanced audio deepfake tools challenge that assumption.

6. Emotional Manipulation at Scale

Voice-based social engineering triggers deeper emotional reactions than text alone. The psychological impact can override rational judgment.

7. Permanent Digital Exposure

Once your voice is online, you cannot retract it. The long-term risk grows as synthetic speech systems improve.

Common Mistakes That Make You More Vulnerable

First, reacting emotionally before verifying. That reflex is exactly what AI impersonation scams depend on.

Second, oversharing voice content publicly without understanding downstream risk.

Third, relying on voice alone as proof of identity. As Fidelity Bank notes in its consumer guidance, verification should always involve secondary confirmation methods.

These mistakes are understandable. They are human. However, they are also preventable.

How to Protect Yourself From Voice Cloning

You do not need to delete the internet. You need friction in the right places.

Create a Family Verification Phrase

Agree on a private phrase or question only your close circle knows. If a call feels urgent, ask for it.

Pause Before Acting

AI impersonation scams rely on speed. Take five minutes. Call the person back using a known number.

Layer Authentication

Avoid using voice as your only security factor. Use strong passwords, multifactor authentication, and a secure password manager.
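
For readers who build or configure login flows, the rule above can be sketched in a few lines of Python. This is a minimal illustration, not a production policy: the factor names (`voice_match`, `authenticator_code`, and so on) and the `is_verified` function are hypothetical and chosen only to show the idea that a voice match never counts on its own.

```python
# Hypothetical factor names, used for illustration only.
VOICE = "voice_match"
NON_VOICE_FACTORS = {
    "password",
    "authenticator_code",
    "callback_to_known_number",
    "family_phrase",
}


def is_verified(passed_factors: set[str], minimum_factors: int = 2) -> bool:
    """Return True only if enough recognized factors passed
    and at least one of them is something other than voice."""
    non_voice = passed_factors & NON_VOICE_FACTORS
    recognized = non_voice | (passed_factors & {VOICE})
    return len(recognized) >= minimum_factors and len(non_voice) >= 1


# A convincing voice alone is rejected.
print(is_verified({"voice_match"}))
# Voice plus a second, independent factor is accepted.
print(is_verified({"voice_match", "authenticator_code"}))
```

The design choice is simple: even a perfect voice match leaves the request unverified until a second, independent factor confirms it.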

Limit Public Audio When Possible

You do not need to disappear online. However, be intentional about what you share publicly and where.

Educate Your Inner Circle

The more your friends and family understand AI voice fraud, the less likely they are to fall for it.

The Bigger Picture: AI Is Not the Enemy

AI voice replication is not inherently malicious. It has accessibility benefits, creative uses, and legitimate business applications.

The real issue is digital hygiene. As AI advances, passive security becomes outdated. We need intentional security.

At TREASURELY, we believe cybersecurity should feel empowering. It should reduce friction, not create anxiety.

This technology is a reminder that identity is evolving. Therefore, our security habits must evolve too.

Stay Ahead, Not Scared

Technology will keep changing. However, your awareness is a powerful advantage.

Subscribe to the TREASURELY newsletter for modern digital safety tips, breach insights, and smarter ways to protect your digital life without losing convenience.

Because the goal is not paranoia. It is control.
