Deepfake Video Scam: The Shocking New Face of Online Fraud

TL;DR

- A deepfake video scam uses AI-generated faces, voices, or livestreams to impersonate trusted people and pressure victims into sending money or credentials.

- These scams succeed through social engineering tactics like urgency, authority, and emotional manipulation.

- The safest response is behavioral: pause, verify through another channel, and never act on sensitive requests from a video alone.

Why the Deepfake Video Scam Is Growing So Quickly

Most people still think of deepfakes as internet curiosities — funny celebrity swaps or viral memes.

Cybercriminals see something very different.

To them, a deepfake video scam is one of the most efficient ways to borrow trust from a familiar face and turn it into money, credentials, or access. Instead of hacking systems directly, scammers exploit the weakest point in cybersecurity: human behavior.

Once a deepfake video convinces someone that a request is legitimate, the rest of the attack often unfolds quickly. Within minutes, the victim may send a payment, reset a password, or hand over sensitive data.

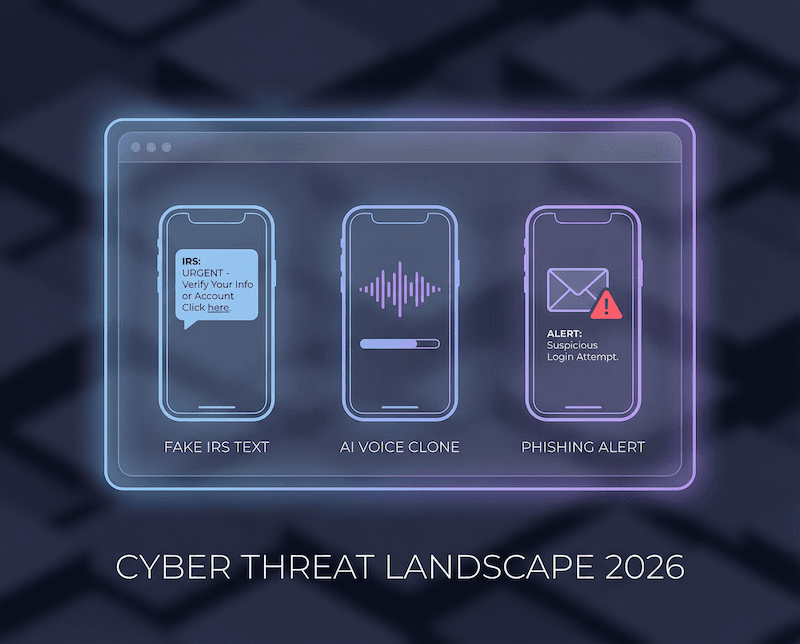

That’s why modern scams increasingly combine AI manipulation with traditional social engineering tactics like phishing attacks, identity impersonation, and credential harvesting.

In other words, the technology may be new, but the psychology behind the deepfake video scam is very old.

What a Deepfake Video Scam Looks Like in Real Life

A deepfake video scam rarely begins with obvious deception.

Instead, it usually appears in a context that feels familiar — a video meeting, livestream event, or personal message. The goal is to blend seamlessly into situations people already trust.

Once the victim believes the interaction is legitimate, scammers introduce urgency. The request might involve transferring funds, sending cryptocurrency, purchasing gift cards, or providing login credentials.

These actions are intentionally difficult to reverse.

The following real-world cases show how a deepfake video scam can affect corporations, online communities, and everyday individuals.

Case 1: The CFO Video Call That Led to a $25M Transfer

In early 2024, Hong Kong police revealed one of the most dramatic examples of a deepfake video scam in corporate history.

An employee at a multinational company joined what appeared to be a routine internal video call. On screen were several colleagues, including the company’s chief financial officer.

But none of them were real.

Every participant was an AI-generated deepfake, used to pressure the employee into approving large financial transfers. In total, roughly $25 million was sent to criminal accounts (SC Media).

The fraud only became clear later when the employee confirmed the transactions with real colleagues (The Guardian).

Why the deepfake video scam worked

Authority: The instructions appeared to come directly from leadership.

Social proof: Multiple fake participants reinforced the illusion of legitimacy.

Process camouflage: The request mirrored normal finance procedures.

The deepfake video scam didn’t break security systems. It simply blended into an existing workflow that employees already trusted.

Case 2: Nvidia’s CEO Used in a Deepfake Video Scam

Deepfake attacks are not limited to internal corporate environments.

In another 2024 incident, scammers created a livestream featuring an AI-generated version of Nvidia CEO Jensen Huang during the company’s GPU Technology Conference.

The stream promoted a cryptocurrency giveaway and instructed viewers to scan a QR code and send crypto in exchange for a promised return.

Thousands of viewers encountered the fake broadcast before it was removed (PCWorld).

What made the deepfake video scam particularly convincing was timing. The fake livestream coincided with a real company event, which helped it look legitimate when it surfaced in search results.

Why the deepfake video scam spread

Brand trust: Viewers recognized the CEO and company.

Algorithm amplification: Platform search results boosted visibility.

Low-friction payments: QR codes shortened the gap between belief and action.

In this case, the platform's own search and recommendation systems did the distribution work that attackers would otherwise have had to do themselves.

Case 3: A Celebrity Deepfake Video Scam Targeting a Family

Deepfake scams also target individuals outside corporate environments.

In Los Angeles, scammers impersonated General Hospital actor Steve Burton using AI-generated voice and video messages. The victim believed she was communicating with the celebrity directly.

Over time, the conversation evolved into repeated financial requests.

According to local reporting, the victim ultimately sent more than $81,000 through gift cards, bitcoin, and cash before realizing the relationship was fabricated (ABC7 Los Angeles).

The deepfake video scam worked because the attackers gradually built emotional trust before introducing financial pressure.

Why the scam succeeded

Emotional manipulation: Personal video messages made the relationship feel authentic.

Isolation: Communication moved to private messaging platforms.

Escalation: Financial requests increased over time.

The Real Threat: Deepfake Video Scams Hack People

Across these cases, the technology itself is not the entire problem.

The real power of a deepfake video scam comes from social engineering.

Cybercriminals combine AI video manipulation with psychological triggers like urgency, authority, and emotional trust. Once those triggers are activated, people often act before verifying what they see.

These attacks frequently connect with other cybersecurity threats as well:

• phishing attacks

• credential stuffing

• account takeover attempts

• malware installation

• data breaches

• identity theft

A convincing deepfake video scam can become the entry point for much larger compromises involving passwords, devices, and financial accounts.

This is why strengthening digital identity protection is critical. Understanding how online identity works is the first step toward defending it.


Common Mistakes That Make Deepfake Video Scams Work

Many victims of a deepfake video scam later report the same realization: something felt slightly off, but the moment moved too quickly to question it.

Several habits make these scams easier to execute.

Trusting video as proof

Video used to feel like the strongest form of identity verification. AI generation has fundamentally changed that assumption.

Acting under pressure

Requests framed as emergencies bypass careful thinking.

Reusing credentials

If scammers obtain a password during a deepfake interaction, every other account that reuses that password is exposed, which can lead to widespread account takeover.

Password reuse remains one of the biggest security risks online.

Ignoring verification steps

Most scams collapse the moment a victim checks through another communication channel.

How to Protect Yourself From a Deepfake Video Scam

The strongest defense against a deepfake video scam is not a piece of software.

It’s behavior.

Pause before responding

Any request involving money, credentials, or account access should trigger an automatic pause.

Switch communication channels

If a request arrives through video, verify it through a separate channel, such as a phone call to a number you already have on file or a message to a contact you know is genuine.

Create verification rules

Teams and families should define clear steps for approving sensitive requests, for example requiring a second approver for payments or agreeing in advance on a code word.
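For teams that route approvals through software, a verification rule can be written down as a simple check. The sketch below is illustrative only: the action names, the `Request` type, and the `may_proceed` function are hypothetical, not part of any real product. It encodes one idea from this article: a sensitive request is approved only after confirmation on a channel other than the one the request arrived on.

```python
from dataclasses import dataclass

# Hypothetical set of actions that should never be approved on the strength of a video alone.
SENSITIVE_ACTIONS = {"transfer_funds", "send_crypto", "buy_gift_cards", "share_credentials"}


@dataclass
class Request:
    claimed_sender: str  # who the person on screen says they are
    action: str          # what they are asking you to do
    channel: str         # where the request arrived, e.g. "video_call"


def may_proceed(request: Request, confirmed_on: set[str]) -> bool:
    """Return True only if a sensitive request was confirmed on a separate channel.

    `confirmed_on` lists the channels where the claimed sender confirmed the
    request using contact details you already had (not ones supplied during the call).
    """
    if request.action not in SENSITIVE_ACTIONS:
        return True
    # A confirmation on the same channel as the request proves nothing:
    # the deepfake could simply confirm itself.
    independent_channels = confirmed_on - {request.channel}
    return bool(independent_channels)


if __name__ == "__main__":
    req = Request("CFO", "transfer_funds", "video_call")
    print(may_proceed(req, {"video_call"}))                        # False
    print(may_proceed(req, {"video_call", "phone_known_number"}))  # True
```

The key design choice is that confirmation on the original channel is discarded, so a fake CFO on a video call can never approve its own request.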

Strengthen password hygiene

Using strong, unique credentials and a password manager reduces the damage if scammers obtain account access.

TREASURELY Perspective: Why Behavioral Security Matters

Cybersecurity conversations often focus on tools and technology.

But most successful attacks — including the deepfake video scam — target human decision-making rather than technical vulnerabilities.

That’s why TREASURELY focuses on strengthening everyday digital habits.

Better password management, stronger identity protection, and simple verification behaviors can stop many attacks before they escalate into data breaches or financial loss.

Security should not require expert knowledge. It should feel intuitive, empowering, and integrated into daily digital life.

Stay Ahead of Emerging Cyber Threats

Deepfake technology is evolving quickly, and scams will continue to adapt.

The best defense is staying informed.

Subscribe to the TREASURELY newsletter for:

• real-world cyber threat breakdowns

• breach alerts and security insights

• smarter password and identity protection tips

Digital safety should feel clear, not overwhelming. TREASURELY is here to help you stay one step ahead.
